Opscotch AI/LLM Overview

Purpose

The Opscotch AI/LLM integration is a comprehensive, portable knowledge artifact that gives an LLM deep operational knowledge of the Opscotch system. Its explicit, structured technical content lets the LLM work effectively with Opscotch configurations, workflows, and operations.

Quick Start

One off:

Each session:

- Run this prompt

- Run this prompt

What's Included

The skill covers:

- Bootstrap Configuration: Understanding and writing bootstrap files for runtime initialization, deployment settings, licensing, and security controls

- Workflow Design: Creating, composing, and debugging workflows with steps, triggers, processors, and data flow

- Runtime Operations: Starting, stopping, and managing the Opscotch runtime environment

- Packaging: Building, signing, encrypting, and deploying workflow packages

- Testing: Writing and running tests for workflow validation

- Administration: Security, licensing, cryptography, and operational best practices

The Public Opscotch MCP tools cover:

- Opscotch thinking guidance

- Documentation

- Schema and programming API discovery

- Workflow and bootstrap validation

The Local Development MCP tools cover:

- Resource compilation testing

- Executing unit tests

- Executing integration tests

How to Use

There are two ways to integrate Opscotch with an AI/LLM.

- Using the Public Opscotch MCP server (preferred)

- Using the raw prompts

Public Opscotch MCP

The Public Opscotch MCP server is the canonical MCP server for Opscotch-specific prompt loading, schema access, schema validation, operational-context resolution, and other MCP-exposed assistance.

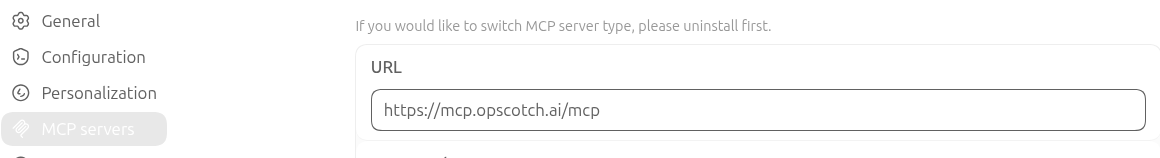

Add the Public Opscotch MCP server to your coding agent. The steps differ for each AI ecosystem, so you'll need to do this yourself. The official endpoint is https://mcp.opscotch.ai/mcp; no authentication is required because the server is public.

Here is an example of adding it to OpenAI Codex:

Public MCP Skill Prompt

Once the MCP server is added to your agent, copy and paste this prompt:

We are working on an Opscotch app. It may be new and require planning, or it may be an existing app where we are adding a feature.

This is a skill-priming prompt: comprehend it completely before starting.

Always use the configured Public Opscotch MCP server at https://mcp.opscotch.ai/mcp, and prefer the configured MCP connection over ad hoc `curl` (or similar) commands when MCP access is available. The MCP server must already be configured in this agent; if it is not, stop and tell the operator. You may probe the URL for liveness, but do not fall back to working without the configured MCP server.

At session start, call the JSON-RPC `ping` method through MCP and wait for the server to wake up; it may take a few tries. Favor tools over resource calls.

Bootstrap your understanding with tool calls in this order:

- `opscotch_get_guidance(role=system)`

- `opscotch_get_guidance(role=architect)`

- `opscotch_get_guidance(role=engineer)`

Use the Public Opscotch MCP whenever it can answer, inspect, resolve, or validate a question more directly than unsupported reasoning.

After guidance is loaded, tell the operator: "Opscotch planning and designing skills are loaded and ready. What are we working on?" The operator may not know much about Opscotch, so provide a few suggestions for what they can ask next. If the operator starts talking about implementation, ask them to prime the Local Development MCP (reference: https://docs.opscotch.co/docs/current/llm/overview/#local-development-mcp).
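The prompt asks the agent to `ping` the server until it wakes up. The retry loop behind that instruction can be sketched in Python as below, assuming the MCP `ping` request is a plain JSON-RPC 2.0 message with no params (as described in the MCP specification). The HTTP transport is hidden behind a hypothetical `send` callable, and the attempt count and delay are illustrative; in practice your agent's MCP client does this for you.

```python
import time
from typing import Callable

# Hypothetical transport: sends one JSON-RPC request to the MCP endpoint and
# returns the decoded response, raising OSError while the server is unreachable.
Send = Callable[[dict], dict]


def make_ping(request_id: int) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `ping` method (it takes no params)."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "ping"}


def ping_until_awake(send: Send, attempts: int = 5, delay_s: float = 2.0,
                     sleep: Callable[[float], None] = time.sleep) -> int:
    """Ping until the server answers; return how many attempts it took."""
    for attempt in range(1, attempts + 1):
        request = make_ping(attempt)
        try:
            response = send(request)
        except OSError:
            response = None  # still waking up: connection refused, timeout, ...
        # A successful ping reply echoes the request id and carries a (empty) result.
        if response is not None and response.get("id") == request["id"] and "result" in response:
            return attempt
        if attempt < attempts:
            sleep(delay_s)
    raise TimeoutError(f"no ping response after {attempts} attempts")
```

Injecting `sleep` keeps the loop testable without real delays; a cold server typically needs only a few attempts, which matches the "it may take a few tries" guidance in the prompt.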

Local Development MCP

The Local Development MCP is your AI execution bridge into your real local project.

The Public Opscotch MCP helps the AI reason correctly about architecture, schemas, and validation rules. The Local Development MCP lets the AI run local checks against your mounted code, tests, and fixtures.

In practice, this means your AI can do more than suggest changes. It can validate paths, run resource unit tests, run workflow integration tests, and execute resource scripts in a controlled runtime that matches your mounted workspace.

For a reliable development loop, treat the Local Development MCP as part of initial setup, not as an optional add-on. Configure it at the start of a session so the AI can move directly from design decisions to verified execution results.

What it is:

- local-only MCP server running in Docker

- image: `ghcr.io/opscotch/opscotch-local-development-mcp:latest`

- endpoint inside the container: `GET /mcp` and `POST /mcp`
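If you want to probe the endpoint by hand, the `GET /mcp` and `POST /mcp` pair is consistent with the MCP Streamable HTTP transport, where the client POSTs each JSON-RPC message and accepts either a JSON body or an SSE stream in reply. The Python sketch below builds such a request with the standard library only. It assumes that transport and the `-p 8080:8080` port mapping used in the examples; the helper name is hypothetical, and whether the server answers before an `initialize` handshake is not covered here.

```python
import json
import urllib.request

LOCAL_MCP_URL = "http://localhost:8080/mcp"  # assumes -p 8080:8080 as in the examples


def mcp_post(message: dict, url: str = LOCAL_MCP_URL) -> urllib.request.Request:
    """Wrap one JSON-RPC message in an HTTP POST for an MCP Streamable HTTP endpoint."""
    return urllib.request.Request(
        url,
        data=json.dumps(message).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The client must accept either a JSON body or an SSE stream in reply.
            "Accept": "application/json, text/event-stream",
        },
    )


request = mcp_post({"jsonrpc": "2.0", "id": 1, "method": "ping"})
# To actually send it: urllib.request.urlopen(request, timeout=5)
```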

Required environment:

- `OPSCOTCH_LEGAL_ACCEPTED` (use the base64 acceptance blob as described in Running the runtime in Docker)

- `OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT`

- `OPSCOTCH_LOCAL_MCP_LICENSE_ROOT`

- `OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT`

These should typically be set to:

- `OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT=/workspace`

- `OPSCOTCH_LOCAL_MCP_LICENSE_ROOT=/license`

- `OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT=/artifacts`
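To catch a missing or mistyped value before starting the container, you could run a small pre-flight check over the environment you plan to pass with `-e`. The Python helper below is hypothetical and not part of the Local Development MCP; it only checks the variable names and typical values listed above.

```python
from typing import Mapping

# Variable names from the list above, with their typical container-side values.
TYPICAL_ROOTS = {
    "OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT": "/workspace",
    "OPSCOTCH_LOCAL_MCP_LICENSE_ROOT": "/license",
    "OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT": "/artifacts",
}
REQUIRED = ["OPSCOTCH_LEGAL_ACCEPTED", *TYPICAL_ROOTS]


def check_env(env: Mapping[str, str]) -> list[str]:
    """Return human-readable problems; an empty list means the environment looks fine."""
    problems = []
    for name in REQUIRED:
        if not env.get(name):
            problems.append(f"{name} is not set")
    for name, expected in TYPICAL_ROOTS.items():
        value = env.get(name)
        # Other values can work, but they must match the -v mount targets.
        if value and value != expected:
            problems.append(f"{name}={value} (typically {expected})")
    return problems
```

An unusual root is reported rather than rejected, because the only hard requirement is that each root matches the container path you mount with `-v`.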

Artifacts directory

`artifacts` is the local MCP output area. It holds files generated during execution, not source files you edit.

Typical artifact contents include:

- run logs

- test output and summaries

- generated run metadata

- other execution-time debug outputs

`OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT=/artifacts` defines a writable location for these outputs. In Docker, mount it to a host directory you can inspect after runs, for example:

`-v /tmp/opscotch-local-mcp-artifacts:/artifacts`

Start the local MCP server

Here is an example command to start the Local Development MCP server.

```shell
export OPSCOTCH_LEGAL_ACCEPTED='<base64-acceptance-blob>'

docker run --rm -p 8080:8080 \
  -e OPSCOTCH_LEGAL_ACCEPTED \
  -e OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT=/workspace \
  -e OPSCOTCH_LOCAL_MCP_LICENSE_ROOT=/license \
  -e OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT=/artifacts \
  -v /home/jeremy/dev/opscotch/community/opscotch-community:/workspace:ro \
  -v /path/to/license-dir:/license:ro \
  -v /tmp/opscotch-local-mcp-artifacts:/artifacts \
  ghcr.io/opscotch/opscotch-local-development-mcp:latest
```
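If you start the server from a script, building the command as an argument list avoids quoting mistakes in host paths. The Python sketch below reproduces the command above; the function name and the example host paths are hypothetical, while the container side (port 8080, `/workspace`, `/license`, `/artifacts`, image tag) follows the example.

```python
import shlex

IMAGE = "ghcr.io/opscotch/opscotch-local-development-mcp:latest"


def local_mcp_command(workspace: str, license_dir: str,
                      artifacts: str = "/tmp/opscotch-local-mcp-artifacts",
                      port: int = 8080) -> list[str]:
    """Build the `docker run` argv for the Local Development MCP from host paths."""
    return [
        "docker", "run", "--rm", "-p", f"{port}:8080",
        # No value given: docker copies OPSCOTCH_LEGAL_ACCEPTED from the calling environment.
        "-e", "OPSCOTCH_LEGAL_ACCEPTED",
        "-e", "OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT=/workspace",
        "-e", "OPSCOTCH_LOCAL_MCP_LICENSE_ROOT=/license",
        "-e", "OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT=/artifacts",
        "-v", f"{workspace}:/workspace:ro",   # source files, read-only
        "-v", f"{license_dir}:/license:ro",   # license files, read-only
        "-v", f"{artifacts}:/artifacts",      # writable output area
        IMAGE,
    ]


# Hypothetical host paths, for illustration only.
print(shlex.join(local_mcp_command("/home/me/my-opscotch-app", "/home/me/opscotch-license")))
```

`shlex.join` quotes any path containing spaces, so the printed line can be pasted into a POSIX shell. Alternatively, pass the list straight to `subprocess.run(argv, check=True)` after exporting `OPSCOTCH_LEGAL_ACCEPTED`. If you change `port`, point your AI client at that host port instead of 8080.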

If you want the AI to generate a ready-to-run Docker command for your machine, copy and paste this prompt:

Generate a `docker run` command to start `ghcr.io/opscotch/opscotch-local-development-mcp:latest` on my machine.

Requirements:

- expose port 8080

- include `OPSCOTCH_LEGAL_ACCEPTED` as the base64 acceptance blob described in [Running the runtime in Docker](../administrating/agent#running-the-runtime-in-docker)

- set `OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT=/workspace`

- set `OPSCOTCH_LOCAL_MCP_LICENSE_ROOT=/license`

- set `OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT=/artifacts`

- mount my workspace directory to `/workspace` (read-only); the workspace must contain all the files the operator wants to work with.

- mount my license directory to `/license` (read-only)

- mount a writable host directory to `/artifacts`; use a temp directory if none is specified

Return:

1. the exact `export OPSCOTCH_LEGAL_ACCEPTED=...` line I should run first, using the base64 acceptance blob

2. one complete `docker run` command I can copy and paste

3. a short example of what to set as workspace, license, and artifacts paths

Do not return placeholders like `/path/to/...` in the final command unless I have not provided a path.

Ask me only for missing paths.

Here is an example:

```shell
docker run --rm -p 8080:8080 \
  -e OPSCOTCH_LEGAL_ACCEPTED='<base64-acceptance-blob>' \
  -e OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT=/workspace \
  -e OPSCOTCH_LOCAL_MCP_LICENSE_ROOT=/license \
  -e OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT=/artifacts \
  -v /path/to/users/workspace:/workspace:ro \
  -v /path/to/license-dir:/license:ro \
  -v /tmp/opscotch-local-mcp-artifacts:/artifacts \
  ghcr.io/opscotch/opscotch-local-development-mcp:latest
```

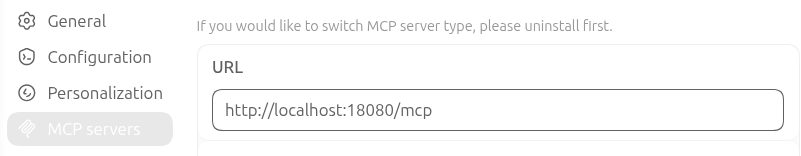

Then configure your AI client to use http://localhost:8080/mcp. The steps differ for each AI ecosystem, so you'll need to do this yourself.

Here is an example of adding it to OpenAI Codex:

Local Development MCP Skill Prompt

Once your local development server is up and running, prime your agent with this skill prompt:

We are working on an Opscotch app in a local development environment. This is a skill-priming prompt: comprehend it completely before starting.

Always use the configured Opscotch Local Development MCP server for execution-time work (tests, script execution, and local path diagnostics). Prefer the configured MCP connection over ad hoc shell commands when MCP tools can do the job. If the local MCP server is not configured in this agent, stop and tell the operator.

The Opscotch Local Development MCP server requires the Public Opscotch MCP server at https://mcp.opscotch.ai/mcp. First check whether it has been configured as an MCP server; if it has not, refer the operator to https://docs.opscotch.co/docs/current/llm/overview/#public-opscotch-mcp

At session start, bootstrap with local MCP tools in this order:

1) `get_guidance(role=system)`

2) `check_local_test_capabilities()`

3) `get_guidance(role=workflow)` or `get_guidance(role=resource)` based on the task

Before running execution tools, verify paths and mounts:

- Use logical roots from `OPSCOTCH_LOCAL_MCP_WORKSPACE_ROOT`, `OPSCOTCH_LOCAL_MCP_LICENSE_ROOT`, and `OPSCOTCH_LOCAL_MCP_ARTIFACT_ROOT`

- Prefer root-relative tool paths

- Include `hostMounts` in tool input so host absolute paths can be translated reliably

- If paths are unclear, call `diagnose_resource_unit_test_path` first

Execution rules:

- Run exactly one execution tool at a time

- Use `run_resource_unit_tests` for resource unit tests (`unit-tests/*.test.ts`)

- Use `run_workflow_integration_test` for workflow integration tests

- Use `run_resource_script` only when script probing/execution is explicitly needed

Use the Public Opscotch MCP (https://mcp.opscotch.ai/mcp) for design-time reasoning (architecture, schemas, and validation strategy), and the Local Development MCP for execution against mounted local files.

After loading local guidance and checking capabilities, tell the operator:

"Local Opscotch development tools are loaded and ready. What should we run first?"

How tool paths work (important)

When you pass paths to tools, they are resolved against the mounted Docker logical root, not against paths on your host filesystem.

If you mounted:

`-v /home/jeremy/dev/opscotch/community/opscotch-community:/workspace:ro`

Use:

- `{"root":"workspace","path":"unit-tests/general/debug-print-body.test.ts"}`

- `{"root":"workspace","path":"tests/general/httpserver.test.json"}`

In that case, do not prefix paths with `community/opscotch-community/`, because that directory is already mounted as `/workspace`.

Prefer including `hostMounts` in tool input so host absolute paths are translated reliably.
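The translation from a host absolute path to a `{"root", "path"}` pair can be sketched as follows. This is a minimal Python illustration, assuming a hypothetical mapping of logical root name to host directory; the actual shape of the `hostMounts` tool input is not described here and may differ.

```python
from pathlib import PurePosixPath
from typing import Mapping


def to_tool_path(host_path: str, host_mounts: Mapping[str, str]) -> dict:
    """Translate a host absolute path into a root-relative tool path.

    host_mounts is a hypothetical mapping of logical root -> host directory,
    e.g. {"workspace": "/home/me/app"}.
    """
    target = PurePosixPath(host_path)
    if ".." in target.parts:
        raise ValueError("refusing to translate a path containing '..'")
    # Try the longest (most specific) host directory first in case mounts are nested.
    for root, host_dir in sorted(host_mounts.items(), key=lambda kv: len(kv[1]), reverse=True):
        try:
            relative = target.relative_to(PurePosixPath(host_dir))
        except ValueError:
            continue  # not under this mount
        return {"root": root, "path": relative.as_posix()}
    raise ValueError(f"{host_path} is not under any mounted root")
```

Because the host prefix is stripped, the result never contains `community/opscotch-community/`, which is exactly the mistake the paragraph above warns about.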

Local MCP tools

- `get_guidance` (role: `system`, `workflow`, or `resource`)

- `check_local_test_capabilities`

- `diagnose_resource_unit_test_path`

- `run_workflow_integration_test`

- `run_resource_unit_tests`

- `run_resource_script`

Recommended call order:

1. `get_guidance(role=system)`

2. `check_local_test_capabilities()`

3. `get_guidance(role=workflow)` or `get_guidance(role=resource)`

4. `diagnose_resource_unit_test_path(...)` when unsure about paths

5. Run one execution tool at a time
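On the wire, an MCP client invokes each tool with a JSON-RPC `tools/call` request carrying the tool `name` and its `arguments` (per the MCP specification). The Python sketch below builds the bootstrap part of the call order, assuming `role` is passed as a plain string argument exactly as written in `get_guidance(role=system)`. The helper names are hypothetical, and the optional `diagnose_resource_unit_test_path(...)` step is left out because its arguments are not documented here.

```python
import itertools
from typing import Optional

# Monotonic JSON-RPC request ids for this session.
_request_ids = itertools.count(1)


def tool_call(name: str, arguments: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments or {}},
    }


def bootstrap_calls(task: str) -> list[dict]:
    """Return the recommended bootstrap calls for a 'workflow' or 'resource' task."""
    if task not in ("workflow", "resource"):
        raise ValueError("task must be 'workflow' or 'resource'")
    return [
        tool_call("get_guidance", {"role": "system"}),
        tool_call("check_local_test_capabilities"),
        tool_call("get_guidance", {"role": task}),
    ]
```

The requests are meant to be sent in list order, waiting for each response before sending the next.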

Execution constraints:

- `run_resource_unit_tests` expects test files under `unit-tests/` matching `*.test.ts`

- `run_workflow_integration_test` expects a valid workflow test file and license path under mounted roots
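A client-side guard can enforce these constraints, plus the one-execution-tool-at-a-time rule, before a call ever reaches the server. The Python sketch below is hypothetical: it checks only the `unit-tests/` and `*.test.ts` rule stated above, and the real tools still perform their own validation.

```python
import threading
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import Callable, TypeVar

T = TypeVar("T")


def is_resource_unit_test(path: str) -> bool:
    """True if a root-relative path looks like a valid run_resource_unit_tests target."""
    p = PurePosixPath(path)
    return (
        not p.is_absolute()              # tool paths are root-relative
        and ".." not in p.parts          # stay inside the mounted root
        and len(p.parts) >= 2
        and p.parts[0] == "unit-tests"   # must live under unit-tests/
        and fnmatchcase(p.name, "*.test.ts")
    )


_execution_lock = threading.Lock()


def run_exclusively(execute: Callable[[], T]) -> T:
    """Run one execution tool call, refusing to start while another is in flight."""
    if not _execution_lock.acquire(blocking=False):
        raise RuntimeError("another execution tool is still running")
    try:
        return execute()
    finally:
        _execution_lock.release()
```

Failing fast instead of queueing makes an accidental overlap visible to the operator rather than silently serializing it.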

Direct skill files

The skill files are designed to be dropped into an LLM as a system-level skill or knowledge file. They provide:

- Explicit field definitions and requirements

- Schema-backed structural guidance

- Operational procedures and examples

- Architectural reasoning patterns

- Guidance for using the Public Opscotch MCP as the canonical source of MCP-exposed Opscotch resources and tools

Skill File Structure

- opscotch-llm-skill.md - The complete public skill document

- opscotch-architect-skill.md - A complementary design-focused skill for architectural thinking

- opscotch-operations-skill.md - A complementary operations-focused skill for start/run/execute questions

- /llm/apireference-index.json - The public API reference index for normal workflow execution

- /llm/apireference.json - The public API reference detail for normal workflow execution

- /llm/patterns.json - The public pattern reference detail

Schema Files

JSON schema files define the structure and validation rules for Opscotch configuration files. These schemas provide explicit field definitions and requirements for configuration authoring:

- Bootstrap schema - Configuration for runtime initialization, deployment settings, licensing, and security controls

- Workflow schema - Structure for workflow definitions including steps, triggers, processors, and data flow

- Test Runner schema - Configuration for test execution and validation